Many people are fed up with social networks.

Or rather with Facebook.

According to several articles like this one, Facebook's share of daily usage fell this past year, even in the midst of a pandemic that has kept most of us glued to our screens more than ever.

The fact is that other platforms are rapidly gaining traction, such as TikTok, which came out of nowhere and already has over 1 billion active users.

So if you concentrate your marketing strategy on just a couple of social platforms, you run the risk of your efforts fading away as those networks lose popularity.

However, what is not going to disappear, at least not for a good number of years, are searches on Google or any other search engine.

After all, when we want quick information about a service or a product, we google it.

That is why it’s in your interest to work on your SEO and get your website to appear at the top of the results pages without having to pay a penny in ads.

What has changed in terms of SEO in recent months?

Search engine crawler bots (those little bits of software that navigate the vast sea of the internet to find relevant information) are becoming increasingly sophisticated.

Google’s BERT algorithm update at the end of 2019 showed us that Google is getting much better at understanding (through natural language processing) the context of users’ searches and the intent behind them.

This means that websites whose content matches what users are actually searching for tend to rank higher in the results pages.

The key is to focus on the intent behind your prospects’ search, not on how often a specific keyword appears in the content.

In other words, bots know how to recognise phrases that respond to a search even if the words don’t exactly match the keyword in question.

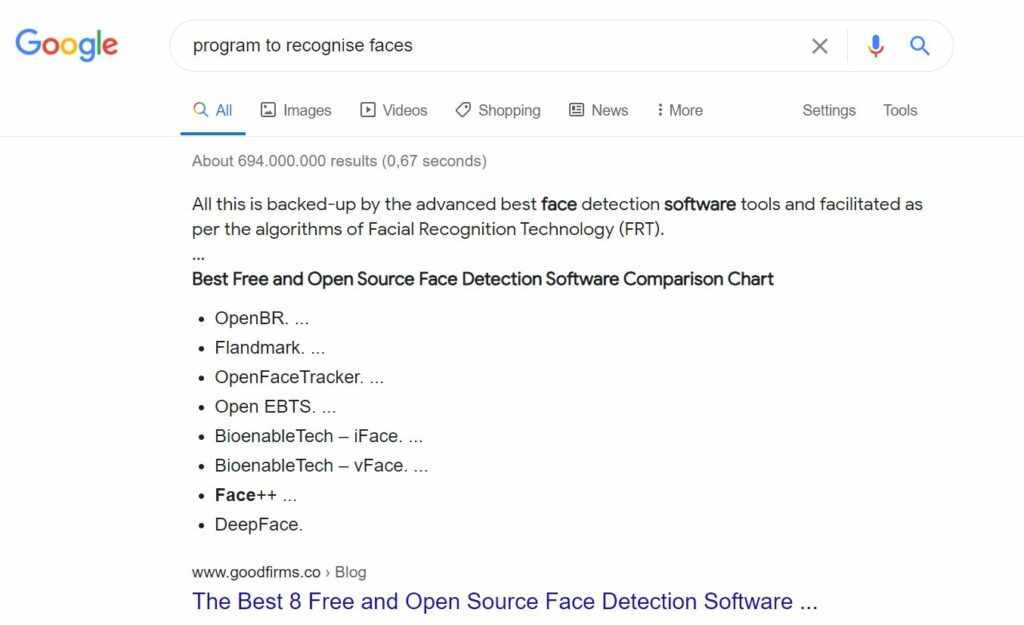

Here is a random example: I searched for “program to recognise faces”.

The first result, which is also a featured snippet (I talk more about these snippets in this article), doesn't mention the words “program” or “recognise”, yet the bots know that my intention is to find face detection software.

If you create content that naturally answers the questions your target audience is asking, you will have a much better chance of the bots finding your content and rewarding you by showing your page before others.

It's no longer a question of repeating a keyword many times throughout a text, as in the past, but of offering information that is useful and actually answers questions.

It’s easier to rank for descriptive keywords that appeal to a wider audience.

For example, broad terms like “digital marketing techniques” or “tips for curly hair” may be very competitive, but they also receive a higher number of searches per month.

Let's review what Google's bots like, so we can keep them happy.

The importance of content structure

You have to make “life” easy for the bots. If they find it too complicated, they will go somewhere else.

Fix any broken links on your website and keep an eye on loading speed. Your website's structure needs to be simple, with as few sections as possible, and its headlines and meta information should respond to your target audience's searches.

This article I wrote last year on meta titles and meta descriptions and this other one on web structure are still totally relevant today.

Take a look at them to make sure you follow the basic principles of good SEO.

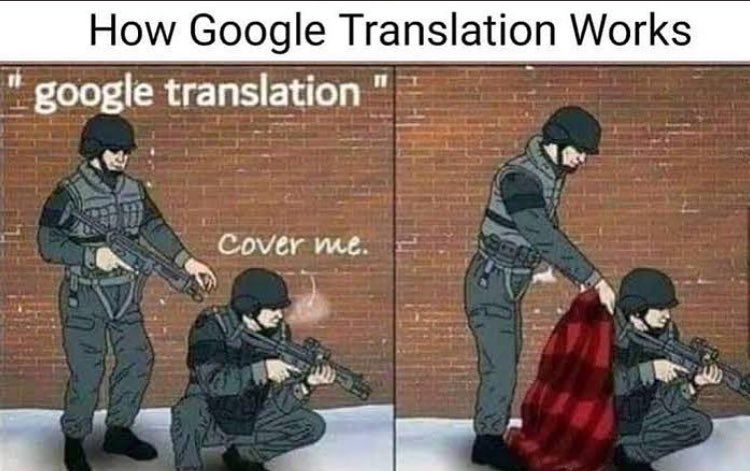

Translations done by humans

Machine translators are getting better and better. I use DeepL a lot.

But be careful, because they aren't perfect. There are always words that get translated literally and lose all meaning, which makes the text less enjoyable to read.

And what happens when what we read doesn’t make sense?

We get bored, leave it and go somewhere else where the information we are looking for is clear and pleasant to read.

And what happens when our page has a very high bounce rate?

(Bounce rate = the percentage of visitors who land on a page and leave without interacting with it or visiting another page)

Google's bots may well think: “OK, this page must be pretty bad because people don't stay to read it. So we'll leave it at the bottom.”

Copywriters specialised in localisation not only translate content correctly but also use the terms that resonate best with the target audience.

The same goes for chatbots: they can be a great help, but they can also be the most frustrating thing in the world.

Who hasn't come across a chatbot that keeps repeating a nonsensical message and never answers the question?

If you decide to include a chatbot on your website, make sure it’s helpful and doesn’t cause friction.

And speaking of bots…

What is a bot?

A bot is a software program that operates on the Internet and performs repetitive tasks.

While some bot traffic comes from good bots, bad bots can have a huge negative impact on a website.

By some estimates, more than half of all Internet traffic comes from bots that scan content, interact with web pages, chat with users or look for victims to attack…

Some bots are useful, such as search engine bots that index content for search or customer service bots that help users.

Customer service chatbots rely on natural language processing (NLP), which allows them to understand users' intent and respond accordingly.

Don’t robotise your chatbot

Personalisation is critical if you want your message to be compelling, so your chatbot also needs to have its own personality.

Design your bot to converse in a style and form that fits your buyer persona.

If your brand voice isn't too serious, this is the time to get creative. For example, some websites create characters for their chatbots, with light-hearted dialogues that raise a smile.

Animals, cartoon characters, anything goes to start a conversation with your prospects.

What you shouldn't do is leave the entire burden of customer service to a chatbot.

Chatbots are meant to answer easy, repetitive questions. When a customer needs more specific assistance, make it easy for them to talk to a person right away and avoid frustration.

Types of bots

We have talked about the good bots, but there are also bad bots that scan the Internet for victims.

They are programmed to log into user accounts, scan the web for contact information to send spam or perform other malicious activities.

These bots duplicate content, spread spam content or carry out credential-stealing attacks.

Content duplication bots are often used to repurpose content for malicious purposes, such as duplicating content for SEO on websites that the attacker owns, violating copyrights, and stealing organic traffic.

To prevent these bad bots from attacking your website, always keep your plugins up to date and find out which security plugins work best for these cases. Here is a list of some of them.

Conclusion

The human factor continues to win the battle against artificial intelligence when it comes to content and real conversations.

This is not to say that all AI should be rejected, far from it.

As we have seen, some bots perform beneficial tasks that simplify work and help us find information faster, while others seek to do harm. After all, bots are created by humans…

Bots mimic human behaviour even if they cannot replicate it exactly.

They cannot (for the moment) grasp double meanings, humour, emotion or irony, nor can they resolve specific customer incidents on their own.

That's why, to win the SEO battle and get plenty of traffic, your content needs above all to be human: designed for your audience, answering specific questions and translated correctly, with all the different registers a language has.
